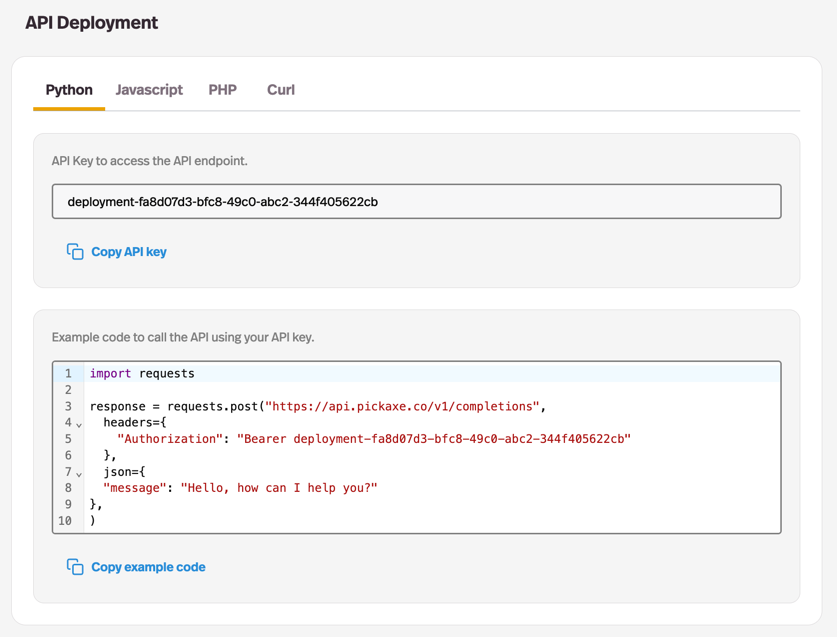

The Pickaxe Agent API is a programmatic interface to any agent you build on Pickaxe. Instead of clicking through a chat UI or embedding a widget, you call a single HTTP endpoint with a message and get back the agent's reply, conversation memory, tool results, citations, and token usage in one JSON response.
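To make the "one JSON response" idea concrete, here is a minimal client-side sketch of reading such a response. The field names (`reply`, `conversation_id`, `tool_results`, `citations`, `usage`) and the sample payload are assumptions made for illustration, not the documented schema; check the Agent API reference for the real shape.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class AgentResponse:
    """Hypothetical container for the parts of a reply the text describes."""
    reply: str
    conversation_id: Optional[str] = None
    tool_results: List[Dict[str, Any]] = field(default_factory=list)
    citations: List[Dict[str, Any]] = field(default_factory=list)
    usage: Dict[str, int] = field(default_factory=dict)


def parse_agent_response(body: str) -> AgentResponse:
    """Parse a JSON response body, tolerating missing optional fields."""
    data = json.loads(body)
    return AgentResponse(
        reply=data.get("reply", ""),
        conversation_id=data.get("conversation_id"),
        tool_results=data.get("tool_results", []),
        citations=data.get("citations", []),
        usage=data.get("usage", {}),
    )


# Illustrative payload only; every key and value here is made up.
SAMPLE_BODY = json.dumps({
    "reply": "Your order shipped yesterday.",
    "conversation_id": "conv_123",
    "tool_results": [{"tool": "order_lookup", "status": "ok"}],
    "citations": [{"source": "shipping-policy.pdf"}],
    "usage": {"input_tokens": 120, "output_tokens": 18},
})

response = parse_agent_response(SAMPLE_BODY)
total_tokens = sum(response.usage.values())
```

Defaulting the optional fields to empty values keeps calling code simple when an agent has no tools or knowledge base attached.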

It's designed for builders who want all the work an agent does (prompt management, knowledge base retrieval, tool orchestration, conversation memory, cost tracking) without writing the orchestration layer themselves. You build the agent visually in Pickaxe, then ship it into your app, iOS extension, Zapier flow, Raycast plugin, or custom Slack app. With three lines of code, you're calling a real production agent.

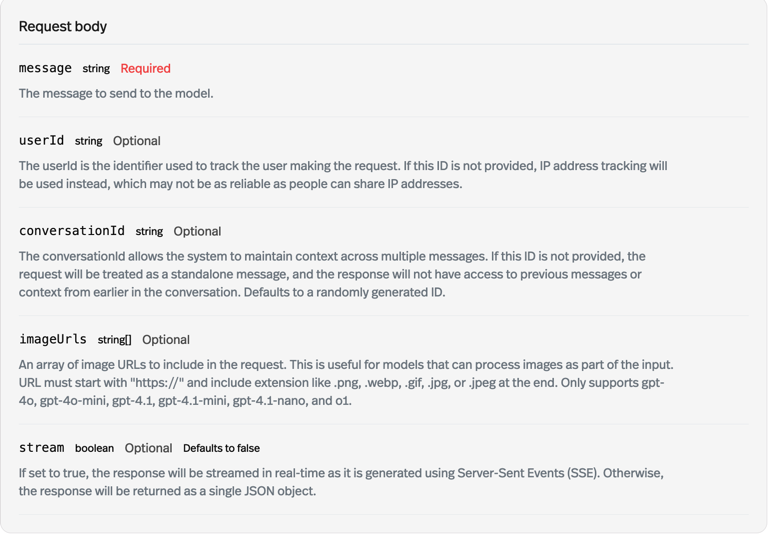

The API supports streaming and non-streaming on the same endpoint, server-side conversation IDs (so the agent remembers across calls), webhooks for long-running runs, idempotency keys for safe retries, and per-agent rate limits visible in your dashboard. Every response includes a usage block, so you can track and forecast per-call cost. Pricing flows through Pickaxe credits: no bring-your-own-key (BYOK) setup and no surprise model-provider invoices.
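The sketch below shows how a client might combine a conversation ID, an idempotency key, and a streaming flag in one request. It only builds the request and does not send it. The endpoint URL, the `Idempotency-Key` header name, the bearer-token auth scheme, and the body fields (`message`, `stream`, `conversation_id`) are all assumptions for illustration; the actual names may differ in the Agent API reference.

```python
import json
import uuid
from typing import Any, Dict, Optional

# Hypothetical endpoint; substitute the URL from your Pickaxe dashboard.
AGENT_ENDPOINT = "https://api.example.com/v1/agents/AGENT_ID/messages"


def build_agent_request(
    message: str,
    api_key: str,
    conversation_id: Optional[str] = None,
    idempotency_key: Optional[str] = None,
    stream: bool = False,
) -> Dict[str, Any]:
    """Assemble the URL, headers, and JSON body for one agent call."""
    payload: Dict[str, Any] = {"message": message, "stream": stream}
    if conversation_id is not None:
        # Reusing the ID from an earlier response continues that conversation.
        payload["conversation_id"] = conversation_id

    headers = {
        "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        "Content-Type": "application/json",
        # Generate a fresh key per logical request when none is supplied.
        "Idempotency-Key": idempotency_key or str(uuid.uuid4()),
    }
    return {
        "url": AGENT_ENDPOINT,
        "headers": headers,
        "body": json.dumps(payload),
    }


first_attempt = build_agent_request(
    "Where is my order?",
    api_key="YOUR_API_KEY",
    conversation_id="conv_123",
    idempotency_key="order-question-001",
)
# A retry after a timeout reuses the same key so the call is not processed twice.
retry_attempt = build_agent_request(
    "Where is my order?",
    api_key="YOUR_API_KEY",
    conversation_id="conv_123",
    idempotency_key="order-question-001",
)
```

The returned dictionary can be passed to any HTTP client. The design point is that the idempotency key belongs to the logical request, not to the network attempt, so retries must reuse it rather than generate a new one.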

Use the Agent API for custom mobile and web apps, internal tools, automation flows in Zapier or Make, custom Slack and Discord integrations, on-device voice assistants, and any other case where you need to reach an agent programmatically. Pair it with Pickaxe's WhatsApp, email, Slack, embed, and Agent Pages deployments, all running off the same agent brain, for full omni-channel coverage.